|

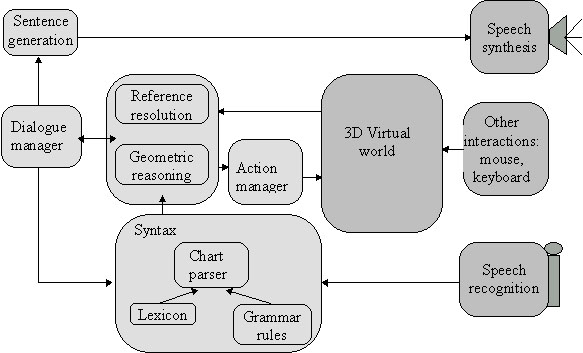

The conversational system takes the form of an agent that is incorporated within entities of the virtual world, here only a brain. The system's overall structure is similar to that of many other interactive dialogue systems (Allen 1994). It was inspired by prototypes we implemented earlier (Nugues 1993) and (Godéreaux 1994, Nugues 1996). It features speech recognition and speech synthesis devices, a syntactic parser, and semantic and dialogue modules. The system's architecture is also shaped by the domain reasoner and the action manager. The system's capabilities were designed to be relatively specific: they concern navigation, manipulation, and some queries on the state of the world (at present, only navigation is implemented). The system assists the user within the world by responding positively to these commands. Understanding navigation commands requires resolving the references that occur in the conversation and reasoning about the geometry of the world. The system architecture will be complemented by a reference resolver that works in coordination with the user's gestures, enabling her or him to name and point at objects. Navigation and manipulation are completed by an action manager that carries out relatively continuous motion to bring the user where she or he wants to go or to animate the brain.
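The flow described above, from a recognized utterance through parsing, interpretation, reference resolution, and the action manager, can be illustrated with a minimal sketch. It is not the system's actual code: every name here (`World`, `Command`, `parse`, `interpret`, `move_steps`, `handle`), the keyword-based interpretation, the linear interpolation, and the example object `cerebellum` are assumptions made for illustration. Speech recognition and synthesis are left out, so the input is plain text.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Command:
    action: str   # hypothetical action label, e.g. "go_to"
    target: str   # name of the resolved object


@dataclass
class World:
    # Hypothetical world state: object name -> (x, y, z) position.
    objects: dict
    user_position: tuple = (0.0, 0.0, 0.0)
    last_mentioned: Optional[str] = None


def parse(utterance: str) -> list:
    """Stand-in for the syntactic parser: lowercase word tokens."""
    return utterance.lower().strip(".!?").split()


def interpret(tokens: list, world: World) -> Optional[Command]:
    """Stand-in for the semantic and dialogue modules with reference resolution."""
    if not tokens or tokens[0] not in {"go", "move", "walk"}:
        return None  # only navigation commands are handled in this sketch
    # Prefer an object named explicitly in the utterance.
    target = next((t for t in tokens if t in world.objects), None)
    # Otherwise resolve "it" / "there" to the last object mentioned.
    if target is None and ("it" in tokens or "there" in tokens):
        target = world.last_mentioned
    if target is None:
        return None
    world.last_mentioned = target
    return Command(action="go_to", target=target)


def move_steps(start: tuple, goal: tuple, steps: int = 4) -> list:
    """Stand-in for the action manager: intermediate positions toward the goal."""
    return [
        tuple(s + (g - s) * i / steps for s, g in zip(start, goal))
        for i in range(1, steps + 1)
    ]


def handle(utterance: str, world: World) -> list:
    """Run one utterance through the pipeline and return the path followed."""
    command = interpret(parse(utterance), world)
    if command is None:
        return []
    path = move_steps(world.user_position, world.objects[command.target])
    world.user_position = path[-1]
    return path


world = World(objects={"cerebellum": (4.0, 0.0, 0.0)})
path = handle("Go to the cerebellum.", world)
```

Splitting the motion into several intermediate positions is only a rough analogue of the relatively continuous motion the action manager performs; a real implementation would also have to reason about the geometry of the world, which this sketch does not attempt.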

System Architecture. The final program running for the performance was written by Matthias Ludwig. |